Bing just made a useful distinction for anyone working on AI search visibility. A classic search engine ranks pages. An answer engine needs grounding. That sounds subtle, but it changes what technical SEOs and content teams should inspect. Ranking asks which document deserves attention. Grounding asks which pieces of evidence are safe enough to support a generated answer.

This article covers the same underlying topic as my German KI-Radar piece, but it is written as a separate SEO Experiments version. The framing here is more technical and more implementation-oriented: what should change in your crawl checks, page templates, content QA, and measurement workflow if the index is no longer only a page-ranking system?

Microsoft's Bing team published "Evolving role of the index: From ranking pages to supporting answers" on May 6, 2026. The post argues that an index now has to do more than retrieve and sort web pages. It also has to support AI systems that synthesize answers. SEO Südwest summarized the same distinction in German in "Der Unterschied zwischen Ranking und Grounding: Bing erklärt".

The practical takeaway is not that ranking is dead. It is that ranking is no longer the whole job. If a search product returns a list of results, the user can scan, click, compare, and correct. If an AI system turns indexed material into a direct answer, the system needs stronger evidence hygiene. It must know whether a fact is current, whether it came from a reliable source, whether two sources disagree, and whether the answer should be withheld instead of guessed.

Ranking and grounding optimize different units

Traditional ranking works mostly at the page and result level. A search engine evaluates documents, links, relevance, quality, freshness, intent fit, and many other signals. The output is a ranked list. The unit that gets clicked is a URL. That URL may contain a mix of facts, opinions, examples, historical context, related links, product data, and disclaimers.

Grounding has a different pressure. A generated answer does not need the whole page in the same way. It needs answer-sized evidence: a date, a definition, a current price, a product constraint, a quote, a table row, a named entity, a policy statement, or a source-backed comparison. The retrieval system has to select fragments that are both relevant and safe to use. That means the page still matters, but the internal structure of the page matters more.

| Dimension | Classic ranking | Answer grounding |
|---|---|---|
| Main question | Which pages should the user visit? | Which facts can support a generated answer? |
| Primary unit | URL, document, result position, click probability. | Fact, passage, table row, entity, source, timestamp. |
| User role | The user verifies by clicking and reading. | The system must reduce ambiguity before answering. |
| Failure mode | Weak result, wrong click, poor SERP satisfaction. | Confident answer based on stale or incomplete evidence. |
| SEO focus | Crawlability, relevance, authority, links, snippets. | Crawlability plus provenance, freshness, chunk clarity, schema, and conflict reduction. |

Why this changes content QA

A page can rank while still being hard to ground. Think of a long article that explains a topic well, but mixes 2024 pricing, 2026 product updates, old screenshots, broad recommendations, and a footnote that limits the advice. A human can often infer which part is current. A retrieval system may split the content into chunks and lose the constraint. If the old price and the new product description are each separated from the dates that qualify them, the grounded answer can combine them incorrectly.

This is where content QA should become more evidence-oriented. Every important claim should pass four checks: what is the claim, where is the evidence, when was it true, and what are the limits? If an article cannot answer those questions for its most important statements, it may still be readable, but it is not ideal grounding material.
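One way to make the four checks repeatable is to record each important claim as a small structured object and list what is missing. The following is a minimal sketch, not a standard: the `Claim` record, its field names, and the example data are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Claim:
    """One statement an answer engine might reuse (hypothetical record shape)."""
    text: str                          # what is the claim?
    source: Optional[str] = None       # where is the evidence?
    valid_as_of: Optional[str] = None  # when was it true? (ISO date string)
    limits: Optional[str] = None       # what are the limits?


def missing_checks(claim: Claim) -> list[str]:
    """Return the names of the four checks this claim does not yet pass."""
    checks = {
        "claim": claim.text,
        "evidence": claim.source,
        "date": claim.valid_as_of,
        "limits": claim.limits,
    }
    return [name for name, value in checks.items() if not (value and value.strip())]


# Made-up example data: the limitation has not been recorded yet.
example = Claim(
    text="The Pro plan costs 49 EUR per month.",
    source="pricing page",
    valid_as_of="2026-05-01",
)
print(missing_checks(example))
```

A claim that returns an empty list is not automatically good grounding material, but a claim that returns anything is a candidate for review.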

Useful rule: if a fact would be dangerous when extracted without the surrounding paragraph, keep the context closer. Put the date, source, exception, and unit near the claim itself.
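The rule can be approximated with a crude proximity check: split a page into paragraph-sized chunks and flag any chunk that states a price without a year in the same chunk. This is only a sketch. The regular expressions, the blank-line chunking, and the sample text are my assumptions, and real retrieval systems chunk content in ways that are not public.

```python
import re

# Hypothetical patterns: a currency amount and a four-digit year.
PRICE = re.compile(r"(?:[$€£]\s?\d[\d.,]*|\d[\d.,]*\s?(?:USD|EUR|GBP))")
YEAR = re.compile(r"\b(?:19|20)\d{2}\b")


def undated_price_chunks(text: str) -> list[str]:
    """Split on blank lines and return chunks that state a price but no year."""
    chunks = [c.strip() for c in re.split(r"\n\s*\n", text) if c.strip()]
    return [c for c in chunks if PRICE.search(c) and not YEAR.search(c)]


# Made-up page text: the first price is separated from its date.
page = """The Pro plan costs 49 EUR per month.

Prices were last reviewed in May 2026 and apply to the EU region.

As of May 2026, the Team plan costs 99 EUR per month in the EU."""

for chunk in undated_price_chunks(page):
    print("price without date:", chunk)
```

The first paragraph is flagged because its date lives in a neighboring paragraph. The third is not, because the date, region, and price travel together.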

The technical SEO checklist gets stricter

None of this removes the old technical SEO work. It makes it less forgiving. AI search systems still need to discover URLs, fetch them, parse them, resolve canonicals, understand language, and see meaningful content in the initial HTML. If the page only becomes useful after client-side JavaScript has run, crawlers and answer systems that do not execute JavaScript will work from a weaker representation than a real browser user sees.
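A quick way to test the initial-HTML point is to take the raw response body, ignore everything inside `<script>` and `<style>`, and check whether the facts you care about are still there. The sketch below uses only the Python standard library. The `VisibleText` helper, the `missing_facts` function, and the sample markup are illustrative, and the check says nothing about how any specific crawler actually renders pages.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text outside <script> and <style>, as a non-rendering fetcher would see it."""

    def __init__(self) -> None:
        super().__init__()
        self.parts: list[str] = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.parts.append(data)


def missing_facts(raw_html: str, facts: list[str]) -> list[str]:
    """Return the facts that do not appear in the unrendered HTML text."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(" ".join(parser.parts).split()).lower()
    return [f for f in facts if f.lower() not in text]


# Made-up response body: the price is only injected by client-side JavaScript.
raw = """<html><body><h1>Pro plan</h1><div id="price"></div>
<script>document.getElementById("price").textContent = "49 EUR per month";</script>
</body></html>"""

print(missing_facts(raw, ["Pro plan", "49 EUR per month"]))
```

In practice the `raw` string would come from a plain HTTP fetch such as curl output, so the result reflects the first response rather than the rendered DOM.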

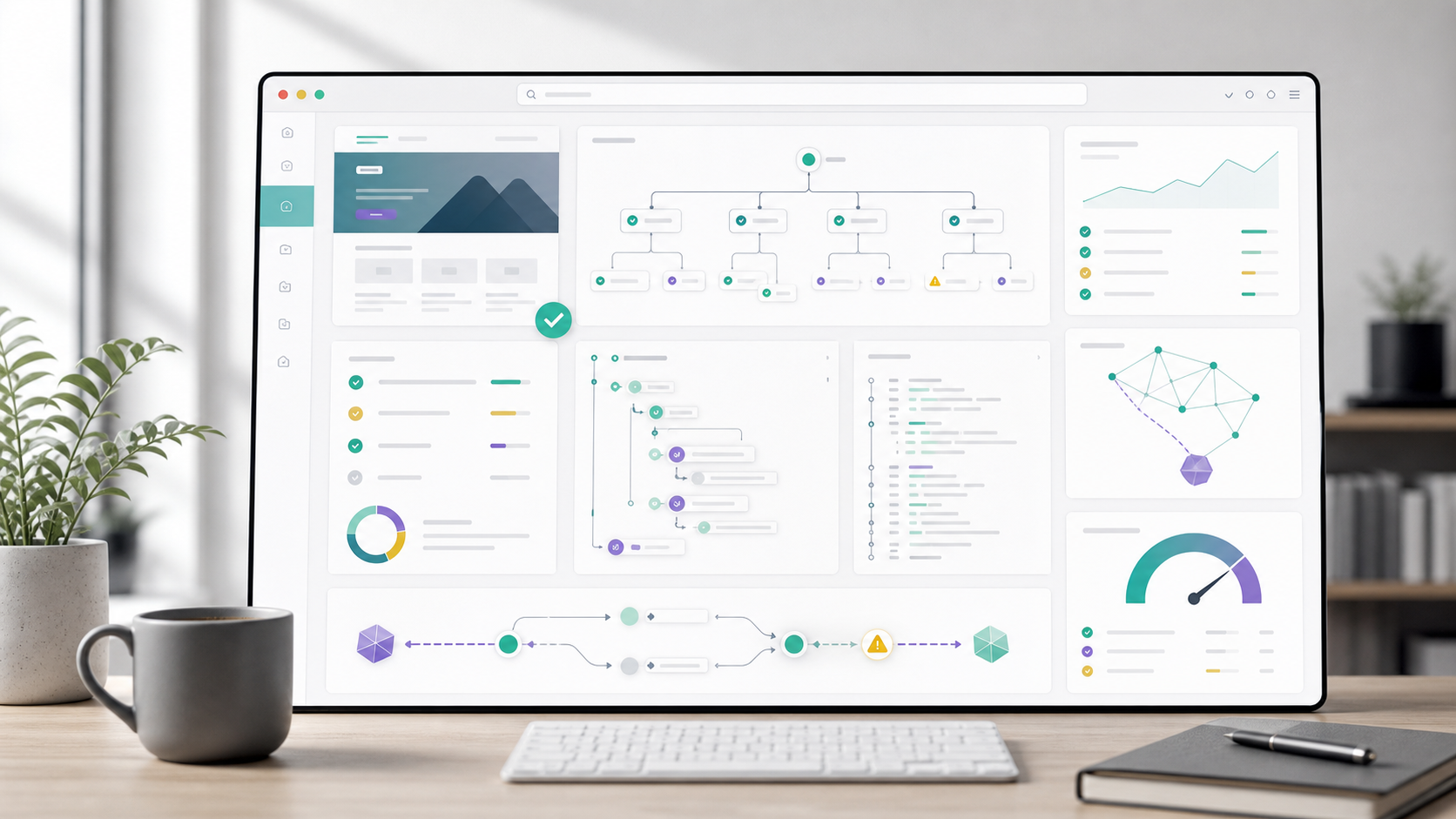

For SEO Experiments, I would split the audit into three layers. First, transport and access: can the relevant crawler reach the page without being blocked by robots.txt, a WAF, bot management, or broken redirects? Second, parseability: does the initial HTML contain the main content, internal links, meta tags, canonical tags, and structured data? Third, evidence quality: can the system isolate a specific answer with source, date, and limitation intact?

| Audit layer | What to inspect | Why it matters for grounding |
|---|---|---|
| Access | Status codes, robots.txt, CDN rules, WAF logs, sitemap inclusion. | No access means no evidence, no matter how good the content is. |
| HTML representation | View-source, curl output, SSR/SSG, canonical, headings, links, JSON-LD. | The indexed representation must contain the same core facts users see. |
| Chunk design | Short sections, descriptive headings, tables, lists, claim-source proximity. | Retrieval systems need self-contained evidence units. |
| Freshness | Updated dates, old claims, contradictory pages, stale product data. | Outdated evidence can directly create outdated answers. |
| Attribution | Author, organization, citations, source links, schema, entity clarity. | Answer systems need to decide whether the claim is worth citing or using. |
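The robots.txt part of the access layer can be checked offline with Python's standard `urllib.robotparser`. This is a sketch under assumptions: the robots.txt content is made up, and the user-agent tokens are examples that should be verified against each crawler's own documentation. It also covers only robots.txt rules. CDN, WAF, and bot-management blocks will only show up in server-side logs.

```python
from urllib.robotparser import RobotFileParser

# Made-up robots.txt; the user-agent tokens are examples and should be
# checked against each crawler's own documentation.
ROBOTS_TXT = """\
User-agent: bingbot
Disallow: /internal/

User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""


def allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Evaluate robots.txt rules offline for one crawler and one URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


for agent in ("bingbot", "GPTBot", "SomeOtherBot"):
    print(agent, allowed(ROBOTS_TXT, agent, "https://example.com/pricing"))
```

Running the same function over your most important URLs and the crawlers you care about gives a small access matrix that is easy to diff after every robots.txt change.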

Grounding makes tables and precise labels more valuable

The old advice to "write for users" is still true, but it is not precise enough. Write so a user understands the page and so a machine can preserve the meaning when the page is split apart. Tables are useful because they bind values to labels. Lists are useful because they define steps and priorities. Descriptive headings are useful because they create retrieval boundaries. Schema is useful because it declares entities, authorship, dates, products, questions, answers, and organizations explicitly.
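As an illustration of declaring authorship and dates explicitly, here is a minimal sketch that builds a schema.org `Article` object as JSON-LD. The property names (`headline`, `author`, `datePublished`, `dateModified`) are schema.org vocabulary. The helper function and every value in the example are placeholders, and which properties a given answer system actually reads is not something I can confirm.

```python
import json


def article_jsonld(headline: str, author: str, published: str, modified: str) -> str:
    """Build a minimal schema.org Article object as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": published,
        "dateModified": modified,
    }
    return json.dumps(data, indent=2)


# All values below are placeholders.
snippet = article_jsonld(
    headline="Ranking vs. grounding",
    author="Jane Doe",
    published="2026-05-10",
    modified="2026-05-12",
)
print(f'<script type="application/ld+json">\n{snippet}\n</script>')
```

The dates in the markup should match the dates visible on the page. Structured data that contradicts the visible content adds a conflict instead of removing one.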

This does not mean every article should become a database dump. It means that important facts should not hide inside ornamental prose. If you publish pricing, say what currency, region, plan, date, and source it belongs to. If you publish a legal or medical statement, make the jurisdiction and date obvious. If you publish a tool comparison, separate observed facts from editorial recommendations.

Risk pattern: one URL with many mixed topics, old and new information, missing dates, and vague claims. That page may still rank, but it is poor grounding material because extracted passages can lose context.

How to measure this without an official AI Search Console

Ranking has mature measurement. Grounding does not. There is no universal dashboard that tells you which exact passage was used by Copilot, ChatGPT Search, Perplexity, Gemini, or another answer system. So the workflow has to combine indirect evidence.

Start with logs. Which AI and search crawlers request which URLs? What status codes do they receive? Do they fetch sitemaps, canonical pages, old redirects, or thin pages? Then test representation. Use curl, view-source, and rendering comparisons to see whether the facts are present in the first response. Then run answer tests, but treat them as samples, not truth. A generated answer is a product output, not a complete measurement system.
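The log step can start as a very small script. The sketch below assumes access logs in the common "combined" format and counts requests per crawler, path, and status code. The user-agent substrings in `BOT_TOKENS` and the sample log lines are assumptions for illustration. Check the real tokens against each vendor's documentation, and keep in mind that user-agent strings can be spoofed.

```python
import re
from collections import Counter

# Assumed substrings for crawler user agents; verify against vendor docs.
BOT_TOKENS = ("bingbot", "GPTBot", "PerplexityBot")

# Combined log format: request line, status, size, referrer, user agent.
LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def bot_hits(log_lines: list[str]) -> Counter:
    """Count (bot, path, status) triples for known crawler tokens."""
    hits: Counter = Counter()
    for line in log_lines:
        match = LINE.search(line)
        if not match:
            continue
        agent = match["agent"].lower()
        for token in BOT_TOKENS:
            if token.lower() in agent:
                hits[(token, match["path"], int(match["status"]))] += 1
    return hits


# Made-up log lines for illustration.
sample = [
    '203.0.113.5 - - [12/May/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm)"',
    '203.0.113.9 - - [12/May/2026:10:00:05 +0000] "GET /old-page HTTP/1.1" 301 0 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '198.51.100.7 - - [12/May/2026:10:00:09 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

for (bot, path, status), count in sorted(bot_hits(sample).items()):
    print(bot, path, status, count)
```

Grouping by status code is the useful part: a crawler that mostly receives 301, 403, or 404 responses is telling you about an access or canonical problem before any answer test does.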

The most useful internal dashboard is often simple: important URL, last updated date, indexability, initial HTML quality, structured data, source coverage, known stale facts, bot access, and answer test notes. That turns AEO from a buzzword into a repeatable QA process.
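A dashboard like this does not need special tooling. As a rough sketch, the fields above can live in a CSV file with one row per URL. The snake_case column names and the example row are illustrative, not a recommended schema.

```python
import csv
import io

# Column names mirror the fields listed above; the snake_case names are illustrative.
COLUMNS = [
    "url", "last_updated", "indexable", "initial_html_ok", "structured_data",
    "source_coverage", "known_stale_facts", "bot_access", "answer_test_notes",
]


def to_csv(rows: list[dict]) -> str:
    """Serialize audit rows to CSV, leaving unknown fields empty."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS, restval="", lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Placeholder row: fields that have not been audited yet stay empty.
rows = [{
    "url": "https://example.com/pricing",
    "last_updated": "2026-05-12",
    "indexable": True,
    "initial_html_ok": False,
    "known_stale_facts": "2024 price in FAQ",
}]
print(to_csv(rows))
```

Empty cells are informative here. They show which checks have not been run yet for a given URL.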

What I would change first

If you manage a content site, I would not start by rewriting everything for AI. I would start with the pages that already matter. Take the 20 URLs that drive rankings, leads, citations, or brand authority. For each URL, identify the five claims an answer engine is most likely to reuse. Then check whether those claims are current, source-backed, and close to their context.

For a SaaS site, this may be pricing, integrations, limits, data handling, and use cases. For a publisher, it may be dates, quotes, statistics, named people, and event timelines. For ecommerce, it may be availability, variants, delivery, returns, and specifications. For technical SEO content, it is usually definitions, crawler behavior, implementation steps, and caveats.

Conclusion: the index is becoming an evidence layer

Bing's distinction is useful because it avoids the lazy claim that AI search is just "SEO with a chatbot UI." The index still ranks pages, but it also has to support answers. That second job requires different quality controls. The indexed web is no longer only a map of possible destinations. It is also an evidence layer for systems that speak on behalf of the retrieved material.

The practical response is not to abandon SEO. It is to make SEO more precise. Keep pages crawlable. Keep HTML meaningful without JavaScript. Keep canonicals and sitemaps clean. Add structured data where it matches reality. But also make claims easier to extract safely: current facts, clear sources, tight context, clean tables, explicit dates, and fewer contradictions across your own site.

Ranking answers "which page should a person inspect?" Grounding answers "which evidence can a system responsibly use?" The sites that understand both questions will be easier to rank, easier to cite, and easier for AI systems to use without inventing missing context.