Short version: Google's new documentation about generative AI features in Search is not a playbook for a separate discipline called "AI SEO." It is closer to a warning label: do not mistake AI Overviews and AI Mode for a shortcut around crawlability, useful content, page experience, and real expertise.

Google finally puts the AI SEO debate back on rails

On May 15, 2026, Google published a new Search Central post introducing a dedicated resource for optimizing websites for generative AI experiences in Google Search. The accompanying documentation is titled Optimizing your website for generative AI features on Google Search. That title matters. It does not say "optimize for large language models." It does not say "create a separate AI index." It says Google Search.

The timing is useful. The SEO industry has spent the last two years inventing labels: AEO, GEO, LLMO, AI visibility, answer engine optimization, and a few more acronyms that will probably not survive the next platform cycle. Some of those labels are fine as shorthand. They describe a real change: users increasingly get synthesized answers, not just lists of links. But Google's new guide makes a harder point. If the target is Google AI Overviews or AI Mode, the foundation is still Search optimization.

That does not make the change small. Generative search changes the shape of the output, the role of evidence, and the way complex queries are decomposed. But it also means the work is less exotic than many sales decks imply. A site that cannot be crawled, indexed, interpreted, trusted, and used by humans does not become AI-ready because someone uploaded a text file or rewrote headings into machine-friendly fragments.

The two technical ideas SEOs should actually understand

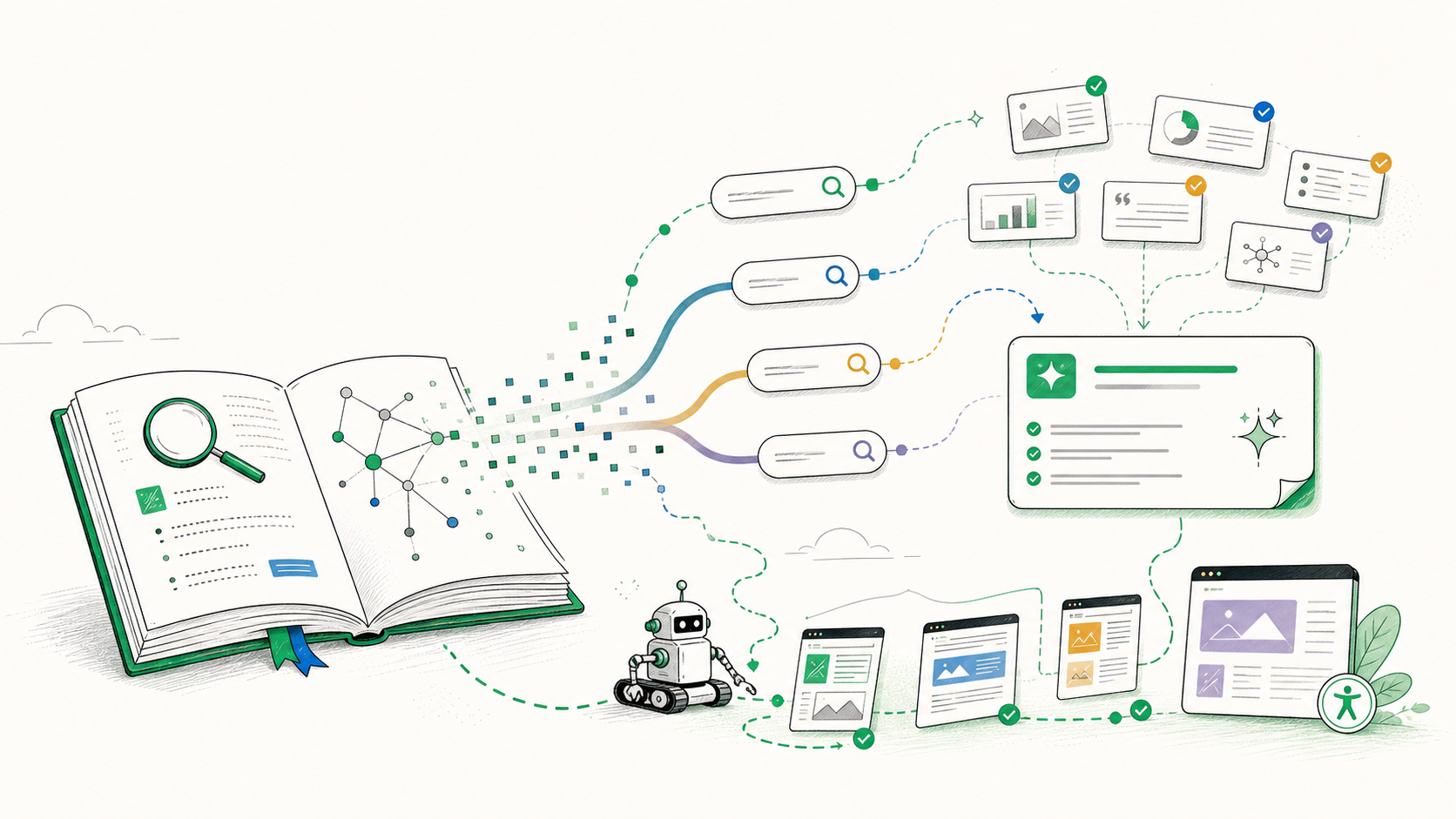

Google's guide introduces two concepts that are worth taking seriously: retrieval-augmented generation, often called RAG or grounding, and query fan-out. These are not buzzwords to sprinkle into reports. They explain why AI search is not simply a different snippet format.

Grounding means the generated response is connected to retrieved material from Google's systems. In practical terms, a page does not only need to rank as a document. It needs to contain information that can support an answer. That raises the bar for clarity, freshness, source proximity (evidence placed close to the claim it supports), and internal consistency. A vague page can still attract a click in classic search. It is less useful as evidence for a synthesized answer.

Query fan-out means a complex user question can be expanded into multiple related searches behind the scenes. Instead of matching one keyword string, Google can break a task into subtopics, perspectives, constraints, or follow-up needs. The immediate temptation is obvious: create pages for every possible sub-query. Google explicitly pushes against that. The better interpretation is to build pages that are genuinely complete for the user intent, not pages that mechanically enumerate every phrase variant.

The strongest phrase in the guide: non-commodity content

The most useful phrase in Google's guide is "non-commodity content." That is the phrase I would put at the center of any AI Search audit. Generic content is becoming less defensible because generative systems can summarize generic knowledge directly. If your page only restates what every other page says, it may still be indexable, but it is not an obvious source to cite, ground, or send a user to.

Non-commodity content does not require a famous brand. It requires substance that is not interchangeable. That can be original data, real pricing observations, a migration log, a field test, product photos, implementation screenshots, expert trade-offs, a local dataset, a comparison table built from direct testing, or a clear methodology. In other words: something that would be expensive for a language model to invent responsibly.

This is where many AI SEO discussions go wrong. They start with the model and ask how to feed it. Google's guidance starts with the user and the web page. Does the page say something useful enough to deserve retrieval? Does it make the evidence easy to understand? Does it help a human complete the task after the AI feature sends a visit? Those are editorial and technical questions before they are prompt-engineering questions.

Technical SEO did not get replaced. It got less optional.

Google's guide is also blunt about access. Pages need to be eligible for Google Search before they can appear in generative Search features. That means crawling, indexing, snippet eligibility, and normal Search quality systems still matter. AI Overviews do not create a second entrance for pages that block Googlebot, hide primary content behind fragile rendering, or return inconsistent canonical signals.
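
The eligibility checks above can be approximated offline before reaching for Search Console. The following is a minimal sketch, not Google's logic: `eligibility_report` and `RobotsMetaParser` are hypothetical names, Python's `urllib.robotparser` does not match Googlebot's rule precedence exactly, and the sketch ignores `X-Robots-Tag` headers and `max-snippet` values.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        if (attr.get("name") or "").lower() in {"robots", "googlebot"}:
            for token in (attr.get("content") or "").split(","):
                if token.strip():
                    self.directives.add(token.strip().lower())


def eligibility_report(robots_txt: str, url: str, html: str) -> dict:
    """Rough pre-check: is the URL crawlable, and does the page opt out
    of indexing or snippets? (Hypothetical helper, simplified rules.)"""
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())

    meta = RobotsMetaParser()
    meta.feed(html)
    meta.close()

    return {
        "crawl_allowed": rules.can_fetch("Googlebot", url),
        "noindex": "noindex" in meta.directives,
        "nosnippet": "nosnippet" in meta.directives,
    }


if __name__ == "__main__":
    robots = "User-agent: *\nDisallow: /private/\n"
    page = '<html><head><meta name="robots" content="noindex, nosnippet"></head></html>'
    print(eligibility_report(robots, "https://example.com/private/page", page))
```

A page that fails any of these three checks is unlikely to be a candidate for generative features, so this kind of pre-check is best run before any content review.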

JavaScript SEO remains a practical fault line. Google can render JavaScript, but that does not make every implementation equal. If the main content, internal links, product attributes, or structured data only exist after client-side hydration, the site is creating unnecessary risk. For important pages, I still want to test the raw HTML, rendered HTML, canonical state, linked resources, sitemap inclusion, and what happens when scripts fail or lag.
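
One way to test the raw HTML is to check whether the phrases that matter on a page exist in the server response before any script runs. This is a minimal sketch under simple assumptions: `missing_from_raw_html` is a hypothetical helper, the HTML is passed in as a string, and text inside `<script>`, `<style>`, and `<template>` is treated as not visible.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collects text that sits outside <script>, <style>, and <template>."""

    SKIPPED = {"script", "style", "template"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def missing_from_raw_html(raw_html: str, must_have_phrases: list) -> list:
    """Returns the phrases that do not appear in the visible raw-HTML text."""
    parser = VisibleTextParser()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(parser.chunks).lower()
    return [phrase for phrase in must_have_phrases if phrase.lower() not in text]


if __name__ == "__main__":
    raw = (
        '<html><body><div id="root"></div>'
        '<script>window.DATA = {"feature": "Waterproof membrane"}</script>'
        "<h1>Trail shoes</h1></body></html>"
    )
    # "Waterproof membrane" only exists inside a script payload here.
    print(missing_from_raw_html(raw, ["Trail shoes", "Waterproof membrane"]))
```

A phrase reported as missing is not necessarily invisible to Google, since rendering may still surface it. It does mark the content that depends on scripts succeeding, which is the risk described above.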

Page experience also deserves a more practical reading. It is not only a Core Web Vitals checkbox. If a user lands from an AI answer and meets an interstitial, a delayed layout, an unclear product state, or a page where the main content is buried below promotional clutter, AI visibility has not produced value. Google mentions page experience because the answer is only the first step. The landing page still has to do the job.

| Workstream | Useful response to Google's guide | Common shortcut to avoid |
|---|---|---|
| Content | Add original data, experience, methodology, examples, and clear source context. | Rewrite generic articles with "AI answer" phrasing and no new information. |
| Technical SEO | Verify crawlability, indexability, rendering, canonicals, internal links, images, and performance. | Assume AI systems will understand pages that are not robust in normal Search. |
| Structure | Use sections, headings, tables, captions, and semantic HTML where they help users. | Artificially chunk every page into tiny fragments for imagined model retrieval. |
| Measurement | Separate Google Search data, AI-assistant referrals, server logs, citations, and conversions. | Declare success or failure from one prompt test or one analytics channel. |
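
For the measurement row, a first step is to bucket referrers from server logs so that assistant traffic is not mixed into Search traffic. This is a rough sketch: the hostnames in `AI_ASSISTANT_HOSTS` are assumptions that need to be checked against your own logs, `classify_referrer` is a hypothetical helper, and visits from AI Overviews or AI Mode arrive with a Google referrer, so this method cannot separate them from classic results.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed referrer hostnames for AI assistants; verify against real log data.
AI_ASSISTANT_HOSTS = {
    "chatgpt.com",
    "perplexity.ai",
    "copilot.microsoft.com",
    "gemini.google.com",
}


def classify_referrer(referrer: str) -> str:
    """Buckets one referrer URL into a coarse traffic source."""
    host = (urlparse(referrer).hostname or "").lower()
    if not host:
        return "direct_or_unknown"
    if host.startswith("www."):
        host = host[4:]
    if host in AI_ASSISTANT_HOSTS:
        return "ai_assistant"
    # Rough match for google.com and country domains such as google.de.
    if host.startswith("google."):
        return "google_search"
    return "other"


def summarize(referrers: list) -> Counter:
    """Counts visits per bucket for a list of referrer URLs."""
    return Counter(classify_referrer(r) for r in referrers)


if __name__ == "__main__":
    sample = [
        "https://www.google.com/",
        "https://chatgpt.com/",
        "https://gemini.google.com/app",
        "",
        "https://news.example.org/post",
    ]
    print(summarize(sample))
```

The buckets are only useful next to conversions and Search Console data. On their own they show where visits came from, not whether a page was cited or grounded.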

The mythbusting section is the real news

The documentation is unusually direct about what is not required. Google says you do not need special AI text files, special markup, Markdown copies, or an llms.txt file to appear in generative AI features in Google Search. That does not mean no one should ever publish llms.txt. It means llms.txt is not a Google Search optimization requirement.

The same applies to artificial chunking. Google is not asking publishers to split every article into model-sized blocks. The web is messy, and Google has spent decades interpreting messy documents. A page can cover multiple angles if that is useful to the reader. The real issue is whether the structure is understandable. Headings, summaries, tables, and nearby source references help because they help users and systems, not because they satisfy a hidden chunk-size rule.

Google also warns against producing pages for every possible phrasing of a query. That warning matters because query fan-out will tempt teams to manufacture pages for each internal sub-query they imagine. If those pages do not add original value, they are just scaled content with a new excuse. The safer path is not more pages. It is better pages.

Structured data still matters, just not as a magic key

Another useful clarification concerns structured data. Google does not describe Schema.org as a new requirement for generative AI features. Structured data remains useful for eligible rich results and for the broader clarity of a site. It can help express entities, products, articles, FAQs, breadcrumbs, events, organizations, and other structured facts. But it is not a substitute for visible content.

This distinction is important in audits. If a product page has perfect Product markup but the price is not visible, the content is thin, reviews are duplicated, and availability is inconsistent, the markup is not the solution. If an article has Article schema but no original reporting, no real author context, and no evidence near its claims, the JSON-LD will not make it a strong grounding source.
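
The product-page case can be turned into a simple audit check: does the price declared in JSON-LD also appear in the visible text? This is a sketch with hypothetical names (`product_price_mismatches`, `JsonLdAndTextParser`). It assumes a single `offers` object and uses a naive string comparison, so a markup price of `49.9` against a visible `49.90` would be reported as a mismatch.

```python
import json
from html.parser import HTMLParser


class JsonLdAndTextParser(HTMLParser):
    """Separates JSON-LD script bodies from visible page text."""

    def __init__(self):
        super().__init__()
        self._mode = None      # "jsonld", "skip", or None for visible text
        self._buffer = []
        self.jsonld_blocks = []
        self.text_chunks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script":
            script_type = (dict(attrs).get("type") or "").lower()
            self._mode = "jsonld" if script_type == "application/ld+json" else "skip"
            self._buffer = []
        elif tag == "style":
            self._mode = "skip"

    def handle_endtag(self, tag):
        if tag == "script" and self._mode == "jsonld":
            self.jsonld_blocks.append("".join(self._buffer))
        if tag in {"script", "style"}:
            self._mode = None

    def handle_data(self, data):
        if self._mode == "jsonld":
            self._buffer.append(data)
        elif self._mode is None:
            self.text_chunks.append(data)


def product_price_mismatches(html: str) -> list:
    """Lists Product markup prices that are absent from the visible text."""
    parser = JsonLdAndTextParser()
    parser.feed(html)
    parser.close()
    visible = " ".join(parser.text_chunks)
    problems = []
    for block in parser.jsonld_blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            problems.append("unparseable JSON-LD block")
            continue
        for item in data if isinstance(data, list) else [data]:
            if not isinstance(item, dict) or item.get("@type") != "Product":
                continue
            offers = item.get("offers")
            price = str(offers.get("price", "")) if isinstance(offers, dict) else ""
            if not price:
                problems.append("Product markup has no single offers.price")
            elif price not in visible:
                problems.append(f"price {price} is in markup but not in visible text")
    return problems
```

An empty list means the markup and the page agree on price. It does not mean the page is a strong source, which still depends on the content itself.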

Agent-friendly websites are the next practical layer

The guide also points toward agentic experiences. That is where the conversation becomes more interesting than classic SEO. AI agents do not only read pages. In some contexts, they inspect interfaces, follow workflows, compare products, submit forms, or prepare actions for a user. Google references agent-friendly website practices and emerging protocols such as the Universal Commerce Protocol.

For most sites, the immediate action is not to rebuild everything for agents. It is to remove avoidable ambiguity. Buttons should have accessible names. Forms should have real labels. Product states should be explicit. Important information should not depend on hover-only interactions. Navigation and pagination should be stable. The same improvements help humans, screen readers, crawlers, and browser-based agents.

This is why I do not treat AI Search as only a content problem. A site can have excellent explanations and still fail at the action layer. If a browser agent cannot identify the next step, if a comparison page hides filters behind custom controls with no accessible labels, or if important data is painted into images without alt text or adjacent HTML, the site is less useful in an agentic web.
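
Some of these action-layer problems are easy to detect statically. The sketch below flags buttons without an accessible name, form controls without a label, and images without an `alt` attribute. `ActionLayerAudit` and `audit_action_layer` are hypothetical names, and the rules are a simplification of how browsers compute accessible names, so treat the output as a list of pages to inspect rather than a verdict.

```python
from html.parser import HTMLParser


class ActionLayerAudit(HTMLParser):
    """Flags controls and images that lack an obvious accessible name."""

    NAMING_ATTRS = ("aria-label", "aria-labelledby", "title")
    IGNORED_INPUT_TYPES = {"hidden", "submit", "button", "reset", "image"}

    def __init__(self):
        super().__init__()
        self.issues = []
        self._labelled_ids = set()  # ids referenced by <label for="...">
        self._controls = []         # (id, display name) still needing a label
        self._label_depth = 0       # > 0 while inside a wrapping <label>
        self._button_text = None    # text parts while inside a <button>

    def _has_naming_attr(self, attr):
        return any((attr.get(name) or "").strip() for name in self.NAMING_ATTRS)

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "label":
            self._label_depth += 1
            if attr.get("for"):
                self._labelled_ids.add(attr["for"])
        elif tag == "button":
            # A naming attribute counts as a name; otherwise collect inner text.
            self._button_text = ["named"] if self._has_naming_attr(attr) else []
        elif tag == "img":
            if "alt" not in attr:
                self.issues.append(f"img without alt: {attr.get('src', '?')}")
            elif self._button_text is not None:
                self._button_text.append(attr["alt"] or "")
        elif tag in {"input", "select", "textarea"}:
            if attr.get("type") in self.IGNORED_INPUT_TYPES:
                return
            if self._label_depth == 0 and not self._has_naming_attr(attr):
                display = attr.get("id") or attr.get("name") or "?"
                self._controls.append((attr.get("id"), display))

    def handle_endtag(self, tag):
        if tag == "label" and self._label_depth > 0:
            self._label_depth -= 1
        elif tag == "button" and self._button_text is not None:
            if not "".join(self._button_text).strip():
                self.issues.append("button without accessible name")
            self._button_text = None

    def handle_data(self, data):
        if self._button_text is not None:
            self._button_text.append(data)

    def report(self):
        # Labels may appear after their control, so resolve them at the end.
        unlabelled = [
            f"form control without label: {display}"
            for control_id, display in self._controls
            if control_id not in self._labelled_ids
        ]
        return self.issues + unlabelled


def audit_action_layer(html: str) -> list:
    audit = ActionLayerAudit()
    audit.feed(html)
    audit.close()
    return audit.report()
```

An empty `alt=""` is accepted on purpose, since it is the standard way to mark a decorative image. Only a missing attribute is reported.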

A practical audit sequence

My practical response to Google's guide is a five-step audit. First, identify the pages that already have search demand or business value. Second, test whether Google can crawl, render, index, and snippet those pages cleanly. Third, review whether the page contains non-commodity information: original experience, data, examples, or methodology. Fourth, check whether the evidence is easy to extract: headings, tables, dates, captions, source references, and coherent sections. Fifth, inspect the action layer: links, buttons, forms, product data, and page experience.
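
The five steps can be recorded per page so that the audit always reports the earliest unresolved step. This is one possible sketch: `PageAudit`, `CHECKS`, and `next_action` are hypothetical names, and the boolean fields stand in for whatever evidence each step actually produces.

```python
from dataclasses import dataclass


@dataclass
class PageAudit:
    """One page's status across the five audit steps (hypothetical model)."""

    url: str
    has_demand_or_value: bool = False    # step 1: selection
    eligible_in_search: bool = False     # step 2: crawl, render, index, snippet
    non_commodity: bool = False          # step 3: original information
    evidence_extractable: bool = False   # step 4: structure around evidence
    action_layer_ok: bool = False        # step 5: links, forms, experience


# Steps 2 to 5 in order, each with the fix to report when it fails.
CHECKS = (
    ("eligible_in_search", "fix crawling, rendering, indexing, or snippet eligibility"),
    ("non_commodity", "add original experience, data, examples, or methodology"),
    ("evidence_extractable", "add headings, tables, dates, captions, or source references"),
    ("action_layer_ok", "fix links, buttons, forms, product data, or page experience"),
)


def next_action(audit: PageAudit) -> str:
    """Returns the earliest unresolved step for a page."""
    if not audit.has_demand_or_value:
        return "out of scope: no search demand or business value"
    for field_name, fix in CHECKS:
        if not getattr(audit, field_name):
            return fix
    return "all steps pass"


if __name__ == "__main__":
    page = PageAudit(
        url="https://example.com/guide",
        has_demand_or_value=True,
        eligible_in_search=True,
    )
    print(page.url, "->", next_action(page))
```

Reporting only the first failing step keeps the order of work intact: there is little point in polishing evidence structure on a page Google cannot index.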

This sequence keeps the work grounded. It avoids the two worst reactions to AI Search. The first is denial: assuming nothing has changed because SEO still matters. The second is panic: chasing speculative files, prompts, and mentions before the site has earned basic usefulness. Google's guide supports neither position. It says the foundation remains Search, but the quality threshold for being useful in an answer-shaped search experience is higher.

Conclusion: Google did not publish an AI SEO hack sheet

None of this means that AI Overviews and AI Mode are simple extensions of blue links. They are not. They use retrieval, grounding, query expansion, and synthesis, and they create user experiences that classic rank tracking cannot fully describe. But the path into those systems is still built on the same web infrastructure: pages, links, content, entities, crawl signals, rendering, and user satisfaction.

If your strategy depends on llms.txt, synthetic mentions, Markdown mirrors, or hundreds of query-fan-out landing pages, Google's guide is bad news. If your strategy is to create technically accessible pages with original evidence, clear structure, useful media, and a good user experience, the guide is essentially validation. AI Search does not remove the need for SEO. It makes weak SEO harder to hide.

Sources

- Google Search Central Blog: A new resource for optimizing for generative AI in Google Search

- Google Search Central: Optimizing your website for generative AI features on Google Search

- Google Search Central: Creating helpful, reliable, people-first content

- web.dev: Agent-friendly website best practices